Does HCI/UX research rely more on qualitative or quantitative measures? How many participants do we typically involve? And for how long? I had a look at 1014 studies published at the CHI2020 conference to investigate which research methods are typically used.

Data

First a bit of context: at CHI2020 a total of 756 papers made it through the peer review process. Of these publications, the vast majority (87.1%) contain one or more studies with human participants.

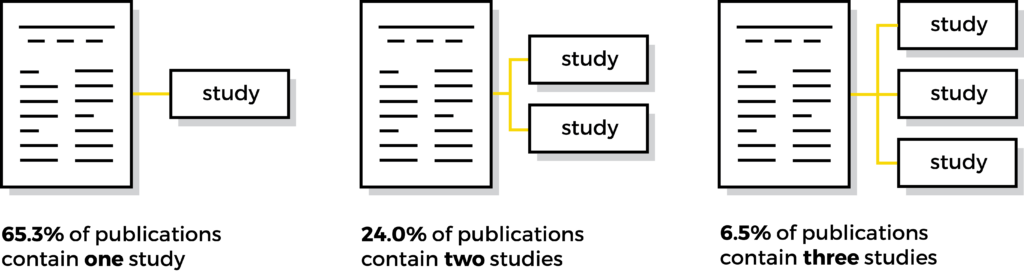

On average, publications describe 1.5 studies, giving a total of 1014 studies involving participants. Most publications contain one study (65.3%), some two studies (24%), three studies (6.5%), and a very small remainder of four or more studies (for example a series of related lab studies).
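
As a rough consistency check, the quoted shares can be turned into a lower bound on the average number of studies per publication. This is only a sketch: the variable names are my own, and it assumes every publication in the "four or more" group has exactly four studies, which the text does not state.

```python
# Shares of publications by number of studies, as quoted in the text (percent).
shares = {1: 65.3, 2: 24.0, 3: 6.5}

# Whatever is left over is the "four or more" group.
four_plus = round(100 - sum(shares.values()), 1)

# Assumption: count every "four or more" publication as exactly four studies,
# which gives a lower bound on the mean rather than the exact value.
mean_lower_bound = (sum(k * v for k, v in shares.items()) + 4 * four_plus) / 100

print(four_plus)                   # share of publications with 4+ studies, in percent
print(round(mean_lower_bound, 2))  # lower bound on studies per publication
```

The bound comes out just under 1.5, which fits the reported average of 1.5 studies per publication.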

Qualitative or quantitative?

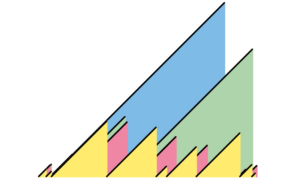

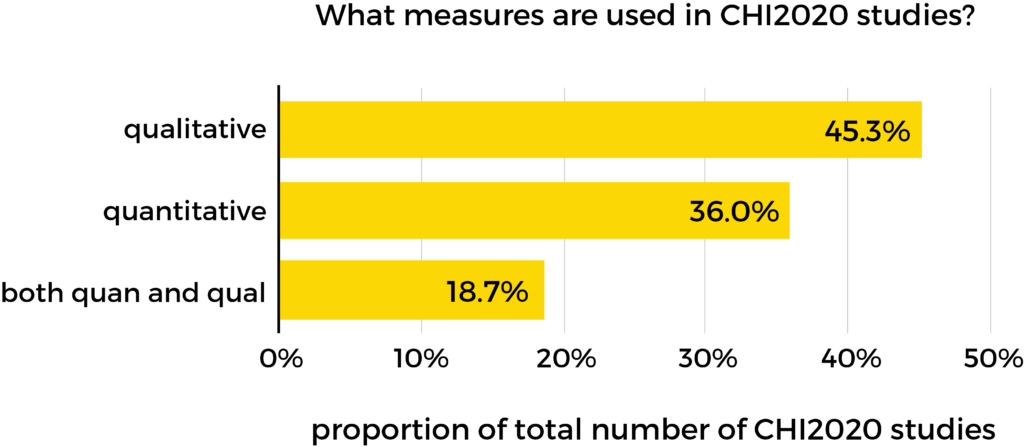

Of the 1014 studies, 459 reported on qualitative measures (45.3%) like themes and quotes. A further 365 reported on quantitative measures (36%), like statistics. Another 190 studies covered both (18.7%).
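
The percentages above follow directly from the three counts. A minimal sketch of the arithmetic (the dictionary keys and variable names are just labels chosen for this example):

```python
# Study counts by type of measure, as reported in the text.
counts = {"qualitative": 459, "quantitative": 365, "both": 190}

total = sum(counts.values())

# Share of each category, in percent, rounded to one decimal place.
shares = {name: round(100 * n / total, 1) for name, n in counts.items()}

# Derived figures (not quoted in the text): studies with at least some
# qualitative or some quantitative measures, counting "both" in each.
any_qual = round(100 * (counts["qualitative"] + counts["both"]) / total, 1)
any_quant = round(100 * (counts["quantitative"] + counts["both"]) / total, 1)

print(total, shares)
print(any_qual, any_quant)
```

Counting the mixed studies on both sides, roughly 64% of studies report at least some qualitative measures and roughly 55% at least some quantitative ones.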

HCI/UX research is a multidisciplinary field that borrows methods from a range of different domains, and this is clearly reflected in the spread across qualitative and quantitative measures.

Methods

Let’s have a closer look at the research methods. You can find a definition for each method in the notes at the bottom.

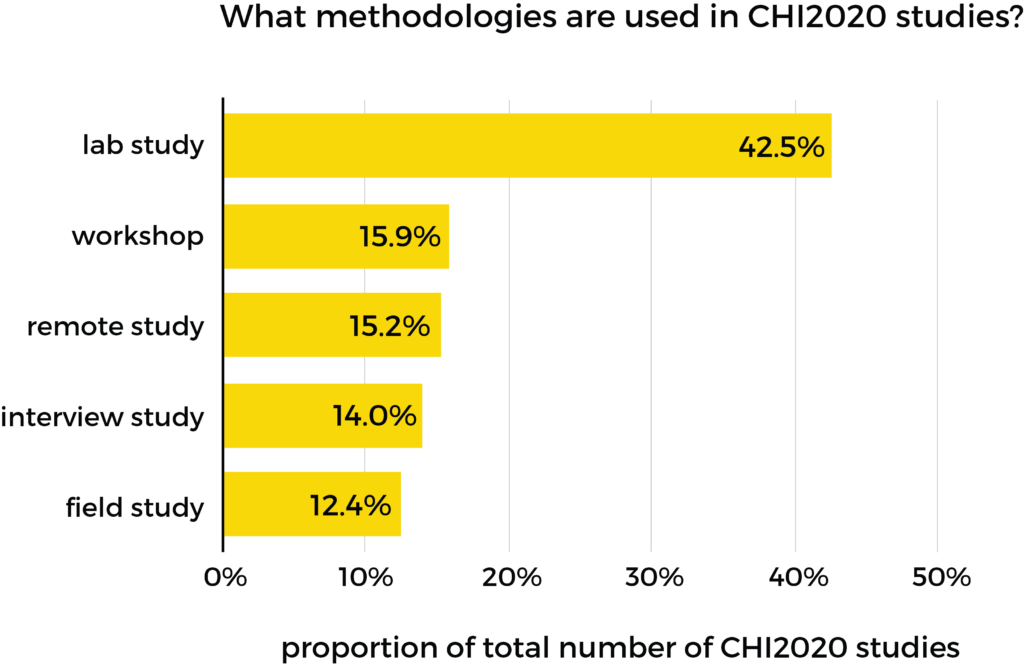

Lab studies are the most popular method by far, with 42.5% of studies using this approach. I did a similar analysis in 2018, and the heavy use of lab studies has stayed much the same since then. The other methods are roughly comparable in their usage: workshops, remote studies, interview studies, and field studies each make up between 12.4% and 15.9% of studies.

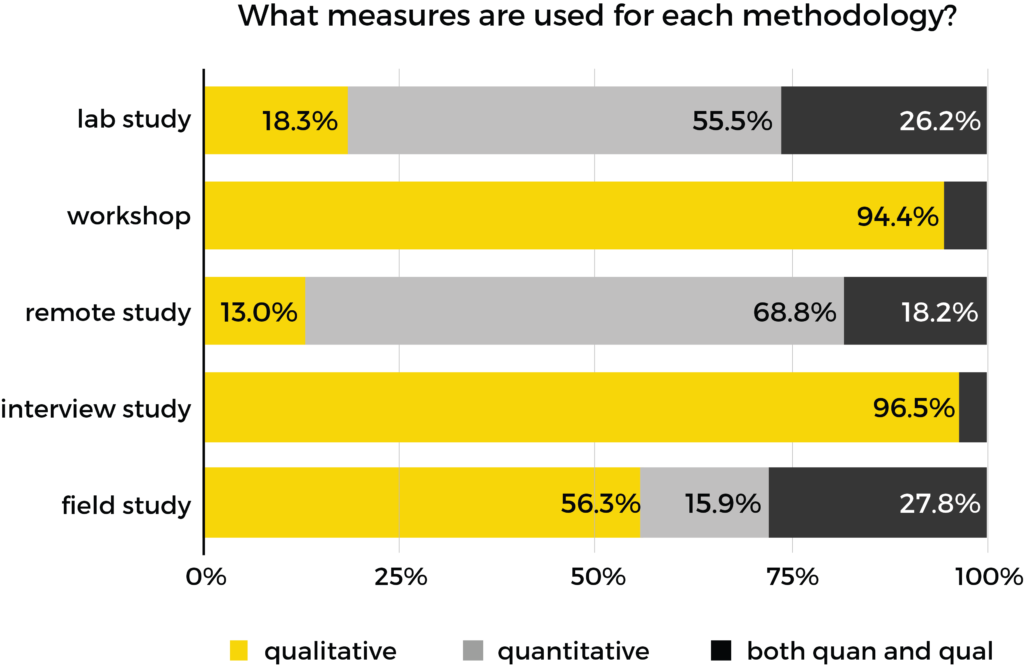

So how about the measures that are used within each of those methods?

Some of the methods are clearly qualitative: interviews and workshops almost exclusively use a qualitative approach in the analysis. Field studies are more mixed, and the majority of both remote studies and lab studies rely on quantitative analysis.

Number of participants

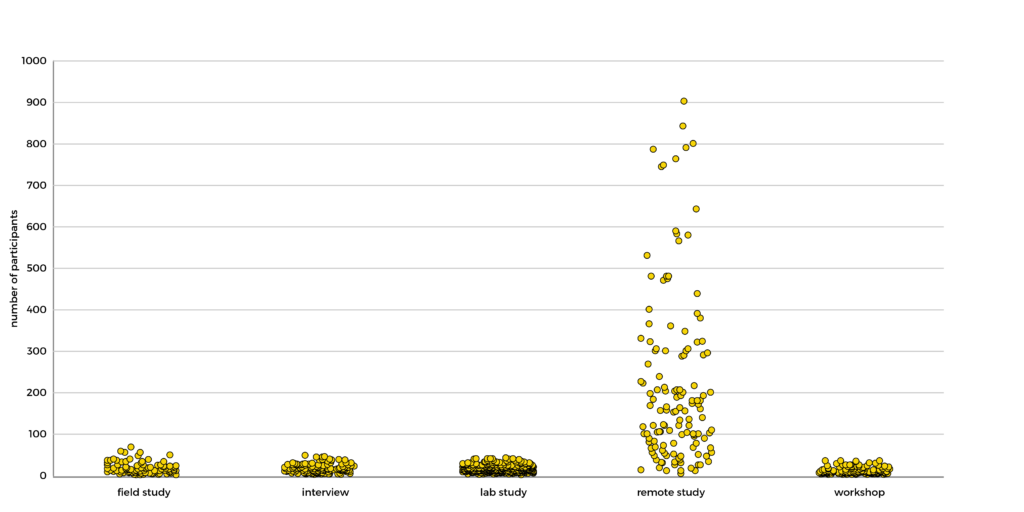

The choice of method also affects the number of participants involved (or vice versa: you may choose a certain research method because of restrictions around the number of people you can involve).

In 23 studies it was not clear how many participants were involved (for example “a team” or “a class”), but all other studies listed the exact number of participants. For this visualisation I’ve left out 92 outliers, primarily massive remote studies that skew the data.

As is obvious from the graph, large studies are typically remote studies. Often these are task-based studies conducted with thousands of Amazon Mechanical Turk workers, or surveys sent to thousands of participants. The largest remote study at CHI2020 was by Facebook: a survey on Facebook with roughly 50,000 respondents (49,943 to be exact). The other methods typically rely on far smaller numbers of participants, with medians of 9 to 16 people:

| Method | Lowest number of participants | Highest number of participants | Mode | Median | Mean |
|---|---|---|---|---|---|
| Field study | 1 | 750 | 11 | 16 | 44.4 |
| Interview | 2 | 100 | 12 | 15 | 18.6 |
| Lab study | 1 | 288 | 12 | 15 | 21.8 |
| Remote study | 4 | 49943 | 100 | 181.5 | 1241.0 |
| Workshop | 2 | 300 | 7 | 9 | 17.0 |
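
The gap between median and mean in the remote study row is what you would expect when a few very large studies sit among many smaller ones. The sketch below shows the effect using Python's standard `statistics` module. The sample values are invented for illustration and are not the CHI2020 data, and `summarise` is a hypothetical helper, not the method used for the table.

```python
from statistics import mean, median, multimode

def summarise(participants):
    """Return the table's summary columns for a list of participant counts."""
    return {
        "lowest": min(participants),
        "highest": max(participants),
        "mode": multimode(participants),  # a list, since ties are possible
        "median": median(participants),
        "mean": round(mean(participants), 1),
    }

# Hypothetical remote-study sizes, invented for illustration (NOT the CHI2020 data).
# The last value mimics a single very large survey.
sample = [40, 100, 100, 150, 213, 500, 50000]

with_giant = summarise(sample)
without_giant = summarise(sample[:-1])

print(with_giant)
print(without_giant)
```

In this made-up sample, dropping the single giant study moves the mean from about 7,300 to about 184, while the median only moves from 150 to 125. That is why the median is the more informative column for remote studies.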

How long do studies last?

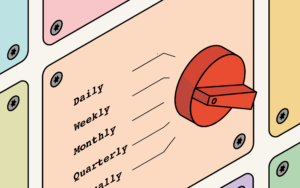

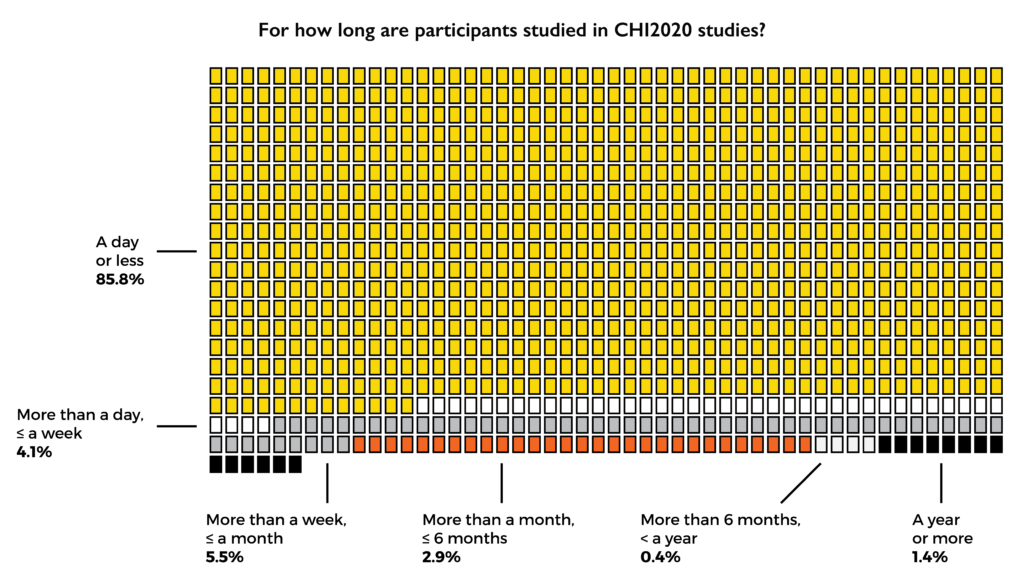

Another key factor in study design is the duration of the research. More specifically: the duration of the involvement of participants. Do you invite people and learn from them in a single session, or do you study them over the course of a longer period of time?

Longitudinal studies are the exception rather than the norm. Over 85% of studies involved participants for a day or less. Often these are 60- or 90-minute sessions: lab studies, remote studies, interviews. Some workshops last a day.

The remaining ~14% of studies involve participants for longer than a day, ranging from several days to several years. The longer studies are typically field studies, where ethnographic research spans more significant periods of time.

Depending on the aims of the research, both short-term and long-term approaches can be appropriate. Nevertheless, it is interesting to see such a focus on short-term studies when so many HCI/UX studies are about new technology or software. To study these properly, the study design has to account for learning effects and novelty effects. An over-reliance on short studies risks inaccurate findings, potentially resulting in new concepts being embraced or dismissed prematurely.

Final thoughts

This analysis has shown HCI/UX research relies on a mix of qualitative and quantitative methods, with a large proportion of studies consisting of lab studies. More importantly: in the vast majority of studies (over 85%) we involve participants for a day or less.

What does this ephemeral approach mean for what we actually learn? Given that a large number of studies aim to assess the potential of highly novel technologies or interactions, how do learning and novelty effects impact the results of our short studies?

When HCI/UX research relies so heavily on lab studies, can we realistically say we are comprehensively studying human-tech interactions – when many of those interactions take place over long periods of time in real-world contexts?

Limitations, notes, disclaimers

- These studies are the accepted publications. We don’t know to what extent they represent all the studies that were submitted. Maybe specific methods are more likely to get accepted, maybe studies with a certain number of participants are more likely to get accepted, who knows (the editors might know)

- CHI2020 is largely an academic conference, so whether these findings apply to more applied settings is TBC

- I used the same definitions to classify studies as I did in 2018, but I merged the ‘remote study’ and ‘survey’ classifications as they were often impossible to differentiate:

  - Field study – defined as a study outside the lab, in the ‘real world’. Anything from asking people to use an app for a few weeks to long-term ethnographical studies

  - Interview – defined as a study that is primarily informed by face-to-face or phone conversations consisting of structured or semi-structured questioning

  - Lab study – defined as a study that takes place in a controlled environment, often consisting of short sessions where participants carry out defined tasks in the presence of the researcher

  - Remote study – defined as a study carried out via some kind of online platform (for example Amazon Mechanical Turk or Prolific Academic), where participants are recruited to remotely carry out defined (unmoderated) tasks or a questionnaire

  - Workshop – defined as a study where a group of co-located participants discuss/carry out tasks/brainstorm/create/etc.

- I used the list of publications in the ACM Digital Library search results, with a filter applied for ‘research articles’ and ‘CHI ’20’

- Yes, doing this analysis was a bit of a nightmare
